Anthropic eyes Fractile’s DRAM-less AI chips to cut inference costs

TL;DR

On May 3, 2026, Tom’s Hardware reported that Anthropic has held early talks with London-based chip startup Fractile to potentially buy its SRAM-based AI inference accelerators. The Information previously reported that Fractile is developing DRAM-less chips aimed at making large-model inference cheaper and faster, with commercial availability expected around 2027.

About this summary

This article aggregates reporting from 3 news sources. The TL;DR is AI-generated from original reporting. Race to AGI's analysis provides editorial context on implications for AGI development.

Race to AGI Analysis

Anthropic’s interest in Fractile’s DRAM-less inference chips is a telling glimpse into where the bottlenecks really are. Training mega-models grabs headlines, but the economic choke point is increasingly inference: serving billions of tokens a day without memory bandwidth eating your margin. When a model generates text token by token, each step has to pull the model’s weights out of memory, so throughput is often capped by how fast data moves rather than by raw arithmetic. Fractile’s bet, co-locating compute and SRAM on-die to avoid slow, power-hungry DRAM, goes straight at that constraint.
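
To make the bandwidth argument concrete, here is a back-of-envelope sketch, not a model of Fractile’s hardware. It assumes the simplest memory-bound picture: every decode step streams all model weights once, and each step emits one token per sequence in the batch. The model size, weight precision, and both bandwidth figures are hypothetical round numbers chosen only for illustration.

```python
# Back-of-envelope bound on autoregressive decode throughput when each step
# is memory-bandwidth bound. All numbers below are hypothetical.


def max_decode_tokens_per_s(
    num_params: float,
    bytes_per_param: float,
    bandwidth_bytes_per_s: float,
    batch_size: int = 1,
) -> float:
    """Upper bound on tokens/s if every decode step must read all weights once.

    Ignores KV-cache traffic, activations, and the compute ceiling, so real
    throughput is lower; it only shows why memory bandwidth sets the limit.
    """
    weight_bytes = num_params * bytes_per_param
    steps_per_s = bandwidth_bytes_per_s / weight_bytes  # one full weight read per step
    return steps_per_s * batch_size  # one token per sequence per step


if __name__ == "__main__":
    params = 70e9         # hypothetical 70B-parameter model
    bytes_per_param = 1   # hypothetical 8-bit weights
    offchip_bw = 3e12     # hypothetical 3 TB/s off-chip memory bandwidth
    onchip_bw = 30e12     # hypothetical 30 TB/s aggregate on-chip SRAM bandwidth

    for name, bw in [("off-chip DRAM", offchip_bw), ("on-chip SRAM", onchip_bw)]:
        tps = max_decode_tokens_per_s(params, bytes_per_param, bw)
        print(f"{name}: ~{tps:.0f} tokens/s per sequence (upper bound)")
```

Under these assumptions the bound scales linearly with bandwidth, so a 10x faster memory path gives a 10x higher ceiling. The catch, not modeled here, is capacity: a 70 GB weight set far exceeds the SRAM on any single die, so an SRAM-only design has to spread a large model across many chips.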

If even part of Fractile’s claims of 100x faster and 10x cheaper inference survives contact with real silicon, labs like Anthropic could run their most capable models far more often and deploy them in more places. That matters for AGI because scaling is increasingly tied to deployment economics: the more queries you can profitably serve, the more data and feedback you collect to push the next generation of models. A deal would also diversify Anthropic’s compute supply beyond Nvidia GPUs, Amazon’s Trainium, and Google’s TPUs, strengthening its bargaining power with each.

At a higher level, this foreshadows a more heterogeneous hardware stack for frontier AI. Instead of one GPU to rule them all, we’ll see specialized inference silicon for particular workload shapes. That fragmentation could speed up progress by letting each lab optimize the full system, from model architecture down to memory layout, for its own AGI roadmap.

Who Should Care

Related News

Locus lands $30M Series B to expand AI logistics across 12 countries

Today

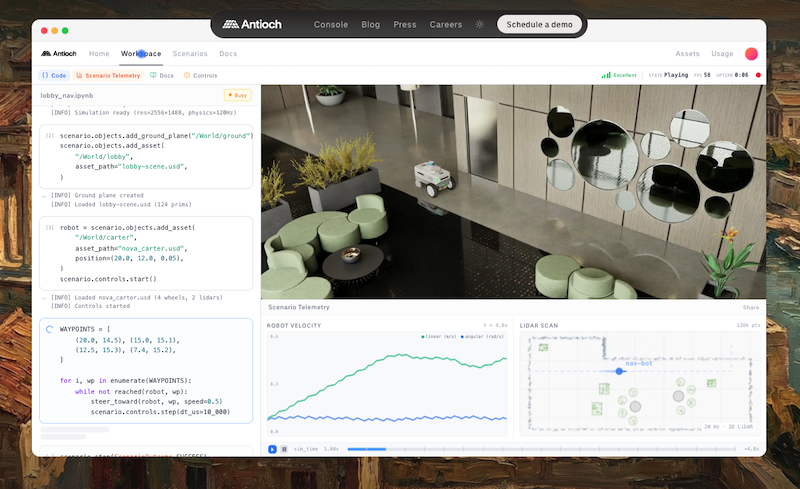

Antioch raises $8.5M to scale simulation-driven autonomy and physical AI

Today

US Treasury warns banks on AI hacking risks tied to Anthropic model

Today